What You Should Know When Considering ICER’s Value Assessment Framework

Over the past several months, our Modeling & Evidence team has been busy tracking the Institute for Clinical and Economic Review’s (ICER) movements. In early February 2017, ICER released a revised Value Assessment Framework, the conceptual framework that guides its evaluations of clinical effectiveness and cost-effectiveness. After a three-month open-comment period, the final ICER Value Assessment Framework for 2017-2019 was posted in June. Below is a recap of our reviews of the revised and final frameworks, with a focus on ICER’s methodological approach to estimating an intervention’s value for money.

Two-Year Pilot Period for Linkage of Societal Values to the CE Threshold

When we reviewed ICER’s revised framework, we had five major questions about its approach to estimating cost-effectiveness thresholds. ICER had proposed to link ‘other benefits or disadvantages and contextual considerations’ (such as unmeasured patient health benefits, relative complexity of the treatment regimen, and impact on productivity) to long-term value for money. However, ICER softened this approach in the final framework and will instead pilot the linkage over the next two years. Following the pilot, ICER will determine how best to incorporate these societal values into its framework.

Ambiguity in Chronic Severe Conditions

Just before the comment period ended in April, we took a deeper dive into how ICER’s framework compares with industry practice in conducting cost-effectiveness analyses. As experienced health economic modelers, we considered our own experiences in relation to ICER’s framework. We identified a few areas of concern, one of which is ICER’s procedure for deciding which scenario analysis to use as the base case for patients with chronic severe conditions. Within its revised framework, ICER proposed to conduct scenario analyses in which (1) the cost per life-year gained is compared to the cost per QALY gained, and (2) a life-year gained in less than perfect health counts as 1 whole year (based on Nord 2015). If either scenario analysis has a substantial impact on the incremental cost-effectiveness ratio, ICER will seek patient and public comment to help decide which scenario analysis should serve as the base case. This approach raises a number of questions. Who are the members of the patient and public stakeholder groups? Will their profiles be made publicly available? What questions will they be asked, how will their answers be scored, and how does the scoring translate to ICER’s decision? Since the base case result will determine an intervention’s value for money, it will be interesting to see more details emerge about this challenging process. ICER did not provide further clarification in its final framework, so some ambiguity remains.
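To make the two scenario analyses concrete, here is a minimal sketch of how the same intervention can produce three different ratios. It is our own simplified illustration, not ICER’s model: the `Strategy` class, the function names, and all numbers are hypothetical, survival and utility are collapsed into single averages, and discounting is ignored. For scenario (2) we assume that quality gains during the years the comparator would also have lived are counted as usual, while each additional life-year is valued at a full 1.0.

```python
from dataclasses import dataclass


@dataclass
class Strategy:
    """Hypothetical per-patient summary of one treatment strategy."""
    cost: float        # total cost per patient
    life_years: float  # expected survival in years (undiscounted)
    utility: float     # average health-state utility, between 0 and 1


def incremental_ratio(delta_cost: float, delta_effect: float) -> float:
    """Incremental cost per unit of effect gained."""
    if delta_effect <= 0:
        raise ValueError("ratio is not meaningful without a positive effect gain")
    return delta_cost / delta_effect


def scenario_ratios(new: Strategy, old: Strategy) -> dict:
    """Return the cost per QALY, cost per life-year, and the
    'life-year gained counts as 1 whole year' variant (our assumption
    of how that scenario could be operationalized)."""
    d_cost = new.cost - old.cost
    d_ly = new.life_years - old.life_years

    # Conventional QALY gain: survival weighted by utility.
    d_qaly = new.life_years * new.utility - old.life_years * old.utility

    # Scenario (2): quality gain over the shared survival period,
    # plus each extra life-year valued at 1.0 regardless of health state.
    shared_years = min(new.life_years, old.life_years)
    d_equal = shared_years * (new.utility - old.utility) + max(d_ly, 0.0) * 1.0

    return {
        "cost_per_qaly": incremental_ratio(d_cost, d_qaly),
        "cost_per_life_year": incremental_ratio(d_cost, d_ly),
        "cost_per_equal_value_year": incremental_ratio(d_cost, d_equal),
    }


# Illustrative inputs only; these are not taken from any ICER review.
comparator = Strategy(cost=50_000, life_years=4.0, utility=0.5)
intervention = Strategy(cost=150_000, life_years=6.0, utility=0.75)

for name, value in scenario_ratios(intervention, comparator).items():
    print(f"{name}: {value:,.0f}")
```

With these made-up inputs the three ratios differ noticeably, which is exactly the situation in which the choice of base case matters and in which ICER says it would turn to patient and public comment.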

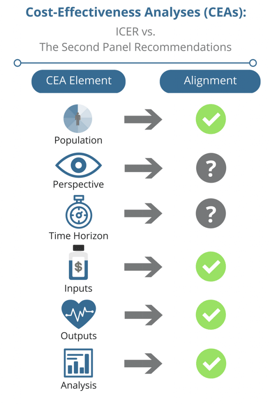

ICER’s Cost-Effectiveness Framework Aligns with the Second Panel Recommendations

Our review of ICER’s Value Assessment Framework led us to investigate the similarities between its guiding principles and the recommendations of the Second Panel on Cost-Effectiveness in Health and Medicine. Since ICER continues to gain national attention, we assume that HEOR practitioners will turn to ICER’s framework for guidance when conducting cost-effectiveness analyses. Had ICER’s framework deviated from the Second Panel’s recommendations, many would have been left wondering which set of guidelines to follow. Yet after a thorough review of both, we found that the two approaches share consistent methodologies on most of the CEA elements on our checklist. Thus, the two approaches are relatively well-aligned.

Up Next: ICER vs. NICE’s Guidelines for Conducting Cost-Effectiveness Analyses

We have now shifted our attention to NICE’s guidelines for conducting cost-effectiveness analyses. This fall, at ISPOR EU in Glasgow, Scotland, I will moderate an issue panel that will address the similarities and differences between NICE’s guidelines and ICER’s framework. The panel will include Dr. Pall Jonsson (PhD, MRes), Associate Director, from NICE and Dr. Daniel Ollendorf (PhD), Chief Scientific Officer, from ICER. The session will be held on Wednesday, November 8th. Please feel free to send me any questions you might have for the panel. We hope to see you there!