ICER's New Cost-Effectiveness Threshold Raises 5 Major Questions

Early last month, the Institute for Clinical and Economic Review (ICER) released a revised Value Assessment Framework for public comment, based on feedback received from patients, clinicians, life science companies, and other stakeholders. While most of the proposed changes were lackluster, there is one that pharma should take note of: ICER's new method for estimating the cost-effectiveness (CE) threshold.

Proposed Method for Estimating Cost-Effectiveness (CE) Threshold

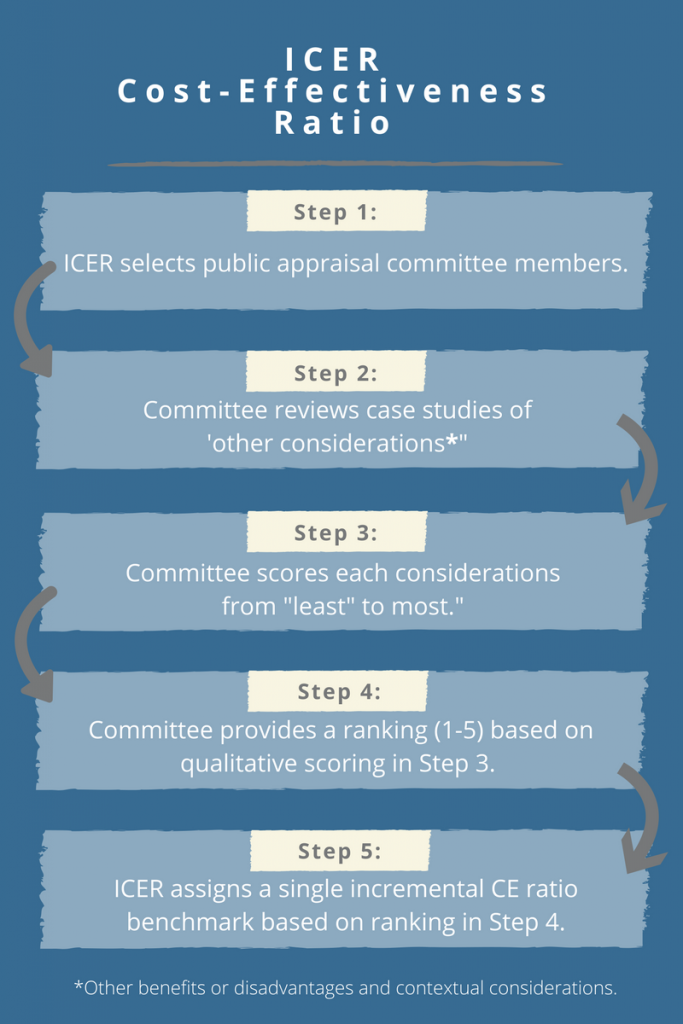

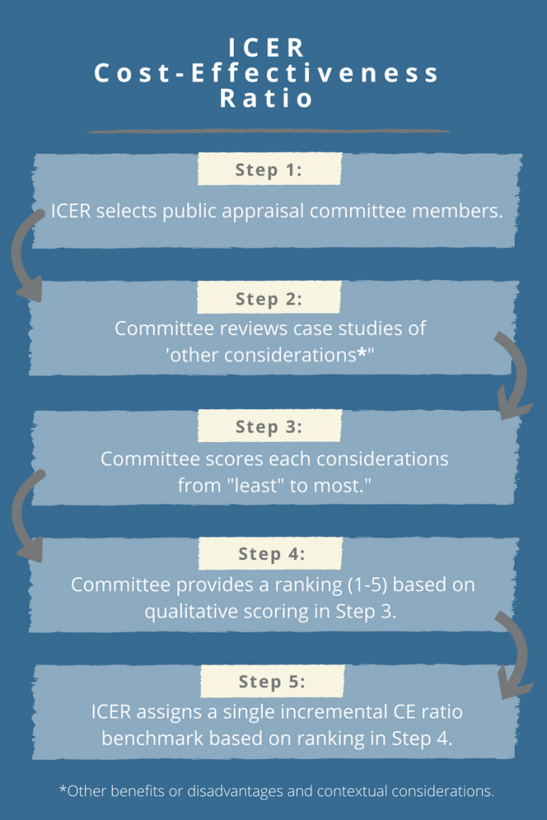

ICER’s proposed approach for estimating the CE threshold attempts to link ‘other benefits or disadvantages and contextual considerations’ – such as unmeasured patient health benefits, relative complexity of the treatment regimen, and impact on productivity, among others – to long-term value for money. The approach contains a modified form of multi-criteria decision analysis (MCDA), in which 10 elements are scored, without quantitative weighting, and averaged to yield an overall ranking on a scale of 1 to 5. The average score will, in turn, be used to assign a single incremental CE ratio benchmark between $50,000 and $150,000 per quality-adjusted life year (QALY). In particular, ICER proposes the following steps:
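As a rough illustration of the scoring logic described above, the short Python sketch below averages 10 unweighted element scores and maps the average onto the $50,000-$150,000 range. The linear mapping, the direction of the scale (higher score, higher benchmark), and the function name `ce_benchmark` are our own assumptions for illustration; ICER's proposal may assign the benchmark differently.

```python
from statistics import mean

LOW_BENCHMARK = 50_000    # lower end of the range, in $ per QALY
HIGH_BENCHMARK = 150_000  # upper end of the range, in $ per QALY


def ce_benchmark(scores):
    """Map 10 unweighted element scores (each 1-5) to a $ per QALY benchmark.

    Assumption: a simple linear interpolation, where an average of 1 maps to
    the low end and an average of 5 maps to the high end. This is a
    hypothetical mapping, not one confirmed by ICER.
    """
    if len(scores) != 10:
        raise ValueError("expected exactly 10 element scores")
    if any(s < 1 or s > 5 for s in scores):
        raise ValueError("each score must be between 1 and 5")
    average = mean(scores)  # unweighted average ranking
    fraction = (average - 1) / 4  # position of the average within the 1-5 scale
    return LOW_BENCHMARK + fraction * (HIGH_BENCHMARK - LOW_BENCHMARK)


if __name__ == "__main__":
    # Hypothetical committee scores for the 10 elements
    example_scores = [3, 4, 2, 3, 5, 3, 2, 4, 3, 1]
    print(f"Benchmark: ${ce_benchmark(example_scores):,.0f} per QALY")
```

Under this assumed mapping, each one-point shift in the average score moves the benchmark by $25,000 per QALY, which is why the committee's scoring matters so much to the final value-based price.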

Why Should Pharma Care About ICER’s Revised CE Threshold?

The single incremental CE ratio will be used to determine a value-based price, as well as an overall value rating, for each intervention under study. This means that ICER’s findings could place additional downward pressure on drug prices. The potential impact of this proposed change on pharma stakeholders should not be underestimated: your products will be judged against the CE ratio benchmark.

5 Questions For the ICER Cost-Effectiveness Threshold

During the ongoing public commentary period, pharma should examine every nook and cranny of ICER’s Value Assessment Framework, especially the new CE threshold estimate, and ask for greater clarity where necessary. As a start, here are our five main questions regarding the new threshold approach:

Question 1: Who are the members of the independent public appraisal committee?

Drug manufacturers will need to understand the backgrounds of those directly impacting the CE ratio benchmark, since their initial decisions may ultimately place pressure on drug prices. In particular, pharma should seek to find out:

- What are the profiles of the committee members?

- Will the profiles of each member be made publicly available?

- Is the committee composed of clinicians, economists, patient advocacy leaders, and/or payers?

- How many committee members are there in total, and how many members are from each stakeholder group?

Question 2: Will the case studies be specific to a therapeutic/disease area, or will they be generic across therapeutic/disease areas?

If the goal of the case studies is to inform scoring for each of the 10 elements on a visual analog scale, the case studies will need to be relevant to the therapeutic/disease area under consideration. Otherwise, generic case studies may lead the committee to score an element in an unintended way.

Question 3: Is it appropriate to link selected ‘other considerations’ focused on societal impacts to direct cost per QALY thresholds?

Selected elements, such as ‘impact on productivity’, ‘impact on caregiver burden’, and ‘impact on public health’, are societal constructs. Yet these societal elements will be used, along with other considerations, to determine an overall ranking leading to a direct cost per QALY threshold. If these societal elements are to be used, perhaps they should lead to a direct plus indirect cost per QALY threshold.

Question 4: Are the overall ranking scores of 1-5 referenced and compared across therapeutic/disease areas or similar treatments?

In its proposed changes, ICER states that the overall ranking score determined by the public appraisal committee “reflects one group’s judgement”, implying that a second committee might produce a different score. This raises the question of how ICER would explain differences in scores on the same topic from two hypothetical committees. Perhaps a committee’s score should be compared to past scores in similar therapeutic/disease or product areas, as a form of checks and balances.

Question 5: Is it appropriate to assign a ‘one-size fits all’ threshold range across diseases and treatments?

It is reasonable to expect, as discussed by Drummond and Sorenson [1], that the CE threshold may differ depending on the treatments being evaluated or the patient populations being studied. Consider the case of rare diseases, for which orphan or ultra-orphan drugs may not be cost-effective and may exceed ICER’s CE range of $50,000-$150,000 per QALY. Payers may still approve coverage of these therapies, irrespective of a high CE ratio, since they are used to treat life-threatening diseases for which other therapeutic options may not be available. Should these drugs, and others used in special circumstances, be held to a different CE range?

ICER's Value Assessment Framework Comment Period Is Now Open

Although there are many more questions that can and should be asked of ICER regarding proposed changes to its Value Assessment Framework, the cost-effectiveness threshold estimate is the most impactful and controversial and should be given a full review. Take the time to submit comments. We’re submitting these questions, but ICER needs more feedback. Once this change and all others have been ratified, ICER will not revisit its methodology until April 2019. Now is your opportunity to inspect, comment on, and critique the new CE benchmarking approach before the public commentary period ends on April 3rd, at 5pm ET.

[1] Drummond M, Sorenson C. Nasty or NICE? A perspective on the use of health technology assessment in the United Kingdom. Value in Health. 2009;12(2):S8-S13.